Cloud, Cybersecurity & DevOps with AI

No prior experience required — just commitment, guidance, and real-world training.

Designed and led by industry experts in cloud, security, and AI — aligned with real employer demand.

Career Programs

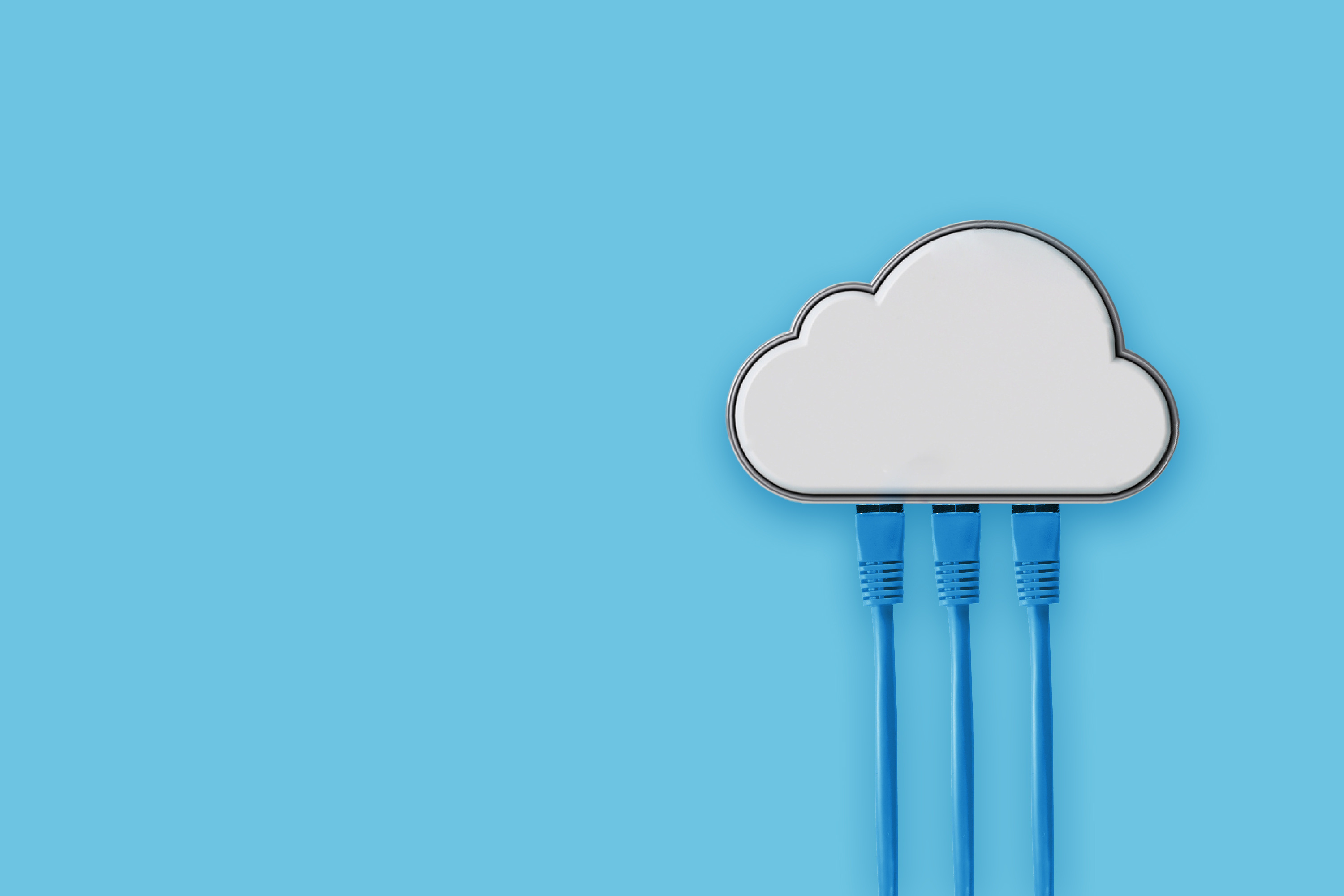

Choose a guided pathway that takes you from zero experience to job-ready skills in just a few months.

Learn core AWS services, Linux, networking, and automation to qualify for Cloud Support and Cloud Engineer roles. Ideal for complete beginners.

Master CI/CD, containers, automation, and cloud-native tooling to support modern DevOps teams and pipelines.

Learn foundational cybersecurity concepts, log analysis, SIEM tools, MITRE ATT&CK, and incident response to qualify for SOC Analyst Tier 1 roles. Designed for beginners with no prior IT or security experience.

Learn how to use AI tools for workplace communication, documentation, ticket handling, and productivity to qualify for customer support, admin, operations, or helpdesk roles. Perfect for beginners and career changers.

You don’t have to struggle alone — our mentors and community walk with you from day one.

Learn how to prepare for interviews, build experience, and navigate the job market after training.

You can change your life with the right skills, guidance, and support. At Cloud Technology Experts, we combine practical training, mentorship, interview prep, and community, so you’re not just taking another course: you’re building a new career.